Customer centricity fails in banks for reasons that are rarely acknowledged directly: it is almost never measured, it is inconsistently governed, and it is seldom reinforced by the incentives that actually shape behavior. The framework gets designed, the training gets delivered, and then the organization slowly returns to its previous operating logic. Exceptions accumulate. Old habits reassert themselves. And what was positioned as a strategic shift quietly becomes another initiative that produced presentations but not results.

If customer centricity is genuinely a risk and performance strategy, and the previous articles in this series have made the case that it is, then it needs to be treated with the same seriousness that banks apply to credit risk or capital management. It needs to be measured against outcomes, governed with a consistent rhythm, and supported by incentives that point in the same direction as the methodology. Without those elements, even the best-designed framework will not survive sustained contact with operational reality.

That is the focus of this final article.

Why Measurement Is the Missing Link

Most banks are very good at measuring activity.

They track volumes, turnaround times, product penetration rates, and utilization. What they measure far less reliably is whether customer-centric lending is actually working: whether the diagnostic conversations are producing useful insight, whether the solutions being structured are genuinely aligned with client problems, and whether the portfolio is performing better as a result.

A customer-centric model demands outcome-oriented metrics across three dimensions:

Client Outcomes

The first is client outcomes: whether cash-flow stability has improved over time, whether volatility has reduced following the financing intervention, whether the financing structure is genuinely aligned with the client’s business cycle, and whether the client has demonstrated resilience when conditions have been stressed. These indicators answer the question that actually matters: did the solution help?
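The article leaves open how "volatility has reduced" should be measured. As a minimal sketch, assuming monthly net cash-flow figures are available for the periods before and after the financing intervention, one common choice is the coefficient of variation (standard deviation divided by the absolute mean). The function names and sample figures below are hypothetical illustrations, not a prescribed method.

```python
from statistics import mean, pstdev


def cash_flow_volatility(monthly_net_cash_flows: list[float]) -> float:
    """Coefficient of variation: population standard deviation / |mean|."""
    avg = mean(monthly_net_cash_flows)
    if avg == 0:
        raise ValueError("mean cash flow is zero; coefficient of variation is undefined")
    return pstdev(monthly_net_cash_flows) / abs(avg)


def volatility_change(before: list[float], after: list[float]) -> float:
    """Relative change in volatility; a negative value means volatility fell."""
    v_before = cash_flow_volatility(before)
    if v_before == 0:
        raise ValueError("baseline volatility is zero; relative change is undefined")
    return (cash_flow_volatility(after) - v_before) / v_before


# Hypothetical client: six months of net cash flow before and after restructuring
before = [40_000, -10_000, 55_000, 5_000, 60_000, -5_000]
after = [25_000, 20_000, 30_000, 22_000, 28_000, 24_000]
print(f"Volatility change: {volatility_change(before, after):+.0%}")
```

The coefficient of variation becomes unstable when average cash flow is close to zero, so a production version would need a floor or an alternative measure for such clients.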

Portfolio Outcomes

The second is portfolio outcomes: how default and migration behavior varies by problem archetype, how frequently exceptions occur and how they perform relative to standard cases, whether early-warning signals are proving accurate, and how concentration and volatility trends are evolving. This is where customer centricity either demonstrates its impact on risk quality or fails to. Banks that track these metrics systematically gain a capability for portfolio steering that product-centric models simply do not offer.
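To illustrate how default behavior can be compared by problem archetype, and between exceptions and standard cases, here is a minimal sketch assuming each loan record carries an archetype label, an exception flag, and a default outcome. The archetype names and the `Loan` structure are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Loan:
    archetype: str      # hypothetical problem-archetype label
    is_exception: bool  # approved outside standard policy parameters
    defaulted: bool     # observed default within the monitoring window


def default_rates(loans: list[Loan]) -> dict[tuple[str, str], float]:
    """Default rate for each (archetype, case type) cohort."""
    counts = defaultdict(lambda: [0, 0])  # key -> [defaults, total loans]
    for loan in loans:
        key = (loan.archetype, "exception" if loan.is_exception else "standard")
        counts[key][0] += int(loan.defaulted)
        counts[key][1] += 1
    return {key: defaults / total for key, (defaults, total) in counts.items()}


portfolio = [
    Loan("working_capital_gap", is_exception=False, defaulted=False),
    Loan("working_capital_gap", is_exception=False, defaulted=True),
    Loan("working_capital_gap", is_exception=True, defaulted=True),
    Loan("seasonal_mismatch", is_exception=False, defaulted=False),
    Loan("seasonal_mismatch", is_exception=False, defaulted=False),
    Loan("seasonal_mismatch", is_exception=True, defaulted=False),
]
for (archetype, case_type), rate in sorted(default_rates(portfolio).items()):
    print(f"{archetype:<20} {case_type:<10} {rate:.0%}")
```

Cohorts this small say nothing statistically; a real portfolio view would apply minimum cohort sizes and consistent observation windows before comparing rates.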

Institutional Learning

The third is institutional learning: how frequently solution toolkits are being reused, whether discovery inputs are improving in quality and consistency over time, which archetypes show persistent underperformance, and whether the feedback from outcomes is actually influencing the design of archetypes and toolkits. Customer centricity only becomes powerful when institutions learn from it systematically. Metrics are what make that learning possible.
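One of the institutional-learning questions above, which archetypes show persistent underperformance, can be made operational with a simple streak rule. The sketch below assumes default rates are already aggregated per archetype per review period and compared against a portfolio benchmark; the three-period threshold, archetype names, and figures are hypothetical.

```python
def persistent_underperformers(
    rates_by_period: list[dict[str, float]],
    benchmark_by_period: list[float],
    min_consecutive: int = 3,
) -> set[str]:
    """Archetypes whose default rate exceeded the portfolio benchmark
    in at least `min_consecutive` consecutive periods."""
    streaks: dict[str, int] = {}
    flagged: set[str] = set()
    for rates, benchmark in zip(rates_by_period, benchmark_by_period):
        for archetype, rate in rates.items():
            # Extend the streak when above benchmark, otherwise reset it
            streaks[archetype] = streaks.get(archetype, 0) + 1 if rate > benchmark else 0
            if streaks[archetype] >= min_consecutive:
                flagged.add(archetype)
    return flagged


# Hypothetical quarterly default rates per archetype and portfolio benchmark
quarterly_rates = [
    {"working_capital_gap": 0.031, "seasonal_mismatch": 0.018, "growth_investment": 0.022},
    {"working_capital_gap": 0.034, "seasonal_mismatch": 0.025, "growth_investment": 0.019},
    {"working_capital_gap": 0.036, "seasonal_mismatch": 0.017, "growth_investment": 0.024},
]
benchmark_rates = [0.022, 0.023, 0.022]
print(sorted(persistent_underperformers(quarterly_rates, benchmark_rates)))
```

An archetype missing from a period keeps its previous streak in this sketch; resetting it instead is an equally defensible choice.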

Dashboards That Drive Behavior, Not Decoration

Dashboards are often designed for reporting. Customer-centric dashboards must be designed for decision-making.

An effective dashboard shows the gap between expected and actual outcomes, highlights deviations early enough to be actionable, and connects current results back to the original diagnostic logic that shaped the solution. It needs to be simple enough to be reviewed regularly by people who have other demands on their attention, and specific enough to trigger a concrete response when something is off track. If a dashboard does not change behavior, it is serving an administrative function rather than a management one.
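As a minimal sketch of the expected-versus-actual logic described above, assume each monitored case stores the outcome expected when the solution was structured, the latest observed value, the archetype behind the original diagnosis, and a review tolerance. The field names, the debt service coverage ratio (`dscr`) metric, and the 10% tolerance are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class OutcomeCheck:
    client_id: str
    archetype: str    # diagnosis that shaped the original solution
    metric: str
    expected: float   # value assumed when the solution was structured
    actual: float     # latest observed value
    tolerance: float  # relative deviation that should trigger a review

    @property
    def deviation(self) -> float:
        """Relative gap between the actual and the expected outcome."""
        return (self.actual - self.expected) / abs(self.expected)

    @property
    def needs_review(self) -> bool:
        return abs(self.deviation) > self.tolerance


checks = [
    OutcomeCheck("C-001", "seasonal_mismatch", "dscr", expected=1.40, actual=1.35, tolerance=0.10),
    OutcomeCheck("C-002", "working_capital_gap", "dscr", expected=1.50, actual=1.10, tolerance=0.10),
]
for c in checks:
    status = "REVIEW" if c.needs_review else "on track"
    print(f"{c.client_id} [{c.archetype}] {c.metric}: {c.deviation:+.0%} -> {status}")
```

The check is symmetric, so a large positive surprise is also flagged; for metrics where only shortfalls matter, a one-sided test would be the simpler design.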

Governance: Where Customer Centricity Lives or Dies

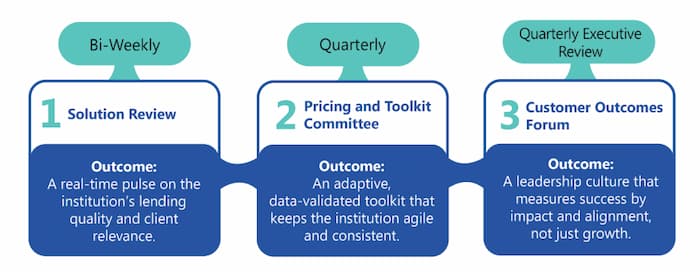

Customer centricity cannot be governed once a year. It requires a rhythm. A practical governance model typically operates at three levels:

i. Bi-Weekly Operational Reviews

At the front-line level, bi-weekly operational reviews focus on new cases coming through the pipeline, the quality of discovery inputs, the appropriateness of archetype selection, emerging exceptions, and any friction in the process that needs to be addressed. These sessions reinforce discipline precisely where it matters most: at the point where client situations are being diagnosed and structured.

ii. Monthly Portfolio Reviews

Monthly portfolio reviews operate at a higher level of aggregation, focused on performance by archetype, deviation trends, early-warning indicators, and structural weaknesses in how certain client situations are being handled. This is where patterns become visible that are not apparent at the individual case level, and where the learning begins to accumulate into something that can improve future decisions.

iii. Quarterly Steering Committees

Quarterly steering committees address the longer-term questions: adjusting archetypes and toolkits based on observed performance, refining risk appetite parameters as the portfolio evolves, reallocating focus across segments as opportunities and risks shift, and aligning incentives with what the framework actually requires. At this level, customer centricity is treated as a managed strategic capability rather than a front-office initiative.

Incentives: The Hard Truth

No operating model survives incentives that point in a different direction. This is the hardest part of sustaining customer centricity, and it is where the most honest conversations need to happen. If relationship managers are rewarded primarily for volume, discipline will lose to volume every time, not because people are cynical, but because they respond rationally to what they are measured on.

Customer-centric incentives do not require abandoning targets or introducing subjectivity into bonus calculations. They require balanced scorecards that treat the quality of the lending process as a genuine performance dimension alongside volume. That means measuring the quality of discovery conversations, the adherence to methodology, the performance of portfolios over time, and the responsible management of exceptions. When incentives reflect outcomes rather than just activity, behavior follows, gradually but reliably.
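A balanced scorecard of this kind can be expressed as a weighted composite. The sketch below assumes each dimension is already scored on a 0 to 100 scale; the dimension names mirror the paragraph above, but the weights and scores are hypothetical, since the article does not specify any.

```python
# Hypothetical weights; the article does not prescribe a weighting
WEIGHTS = {
    "volume": 0.40,
    "discovery_quality": 0.20,
    "methodology_adherence": 0.15,
    "portfolio_performance": 0.15,
    "exception_management": 0.10,
}


def scorecard(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted composite of dimension scores, each normalized to 0..100."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = weights.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[dim] * scores[dim] for dim in weights)


volume_only = {"volume": 100, "discovery_quality": 40, "methodology_adherence": 40,
               "portfolio_performance": 50, "exception_management": 40}
balanced = {"volume": 75, "discovery_quality": 85, "methodology_adherence": 90,
            "portfolio_performance": 85, "exception_management": 80}
print(f"Volume-driven RM: {scorecard(volume_only):.1f}")
print(f"Balanced RM:      {scorecard(balanced):.1f}")
```

With these illustrative weights, a relationship manager who maximizes volume at the expense of process quality scores lower than one with somewhat lower volume and strong discipline, which is the behavioral signal the paragraph describes.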

The institutions that struggle most with this are often the ones that treat it as a soft culture question rather than a hard design question. Incentive architecture is not soft. It is the most direct lever available for shaping how an operating model actually functions in practice.

Why a 60-Day Rollout Beats Multi-Year Programs

One of the most reliable ways to kill a good idea in a bank is to turn it into a multi-year transformation program. The timelines create distance between design and feedback. The scope creates dependencies that slow everything down. The political complexity generates opposition that accumulates faster than results. And by the time the program is supposed to be delivering, the original sponsors have moved on and the institutional memory of why it mattered has faded.

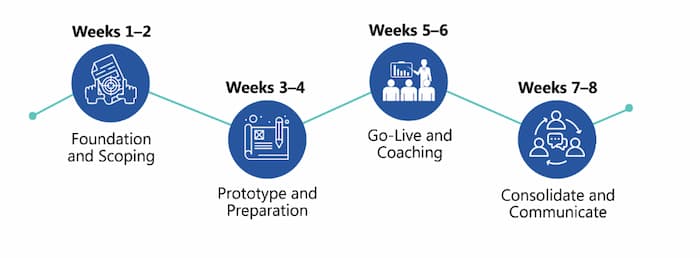

Customer centricity works better when it is rolled out fast and visibly, in a way that generates evidence quickly and creates momentum from demonstrated results rather than promised ones. A pragmatic 60-day rollout is designed around that logic. It typically follows four phases:

i. Days 1–15: Focus and Design

The first two weeks focus on selection and design: choosing one priority SME segment, defining an initial set of problem archetypes, agreeing on solution toolkits, and aligning on risk appetite boundaries. The goal at this stage is not completeness. It is clarity: enough clarity to run real cases through the model with confidence.

ii. Days 16–30: Tooling and Enablement

The following two weeks focus on making the model usable: configuring discovery scripts, setting up dashboards, training a pilot group of relationship managers, and establishing the governance rhythm. Nothing needs to be perfect at this stage. It needs to be functional.

iii. Days 31–45: Live Piloting

Weeks five and six involve live piloting: running real client situations through the framework, capturing the friction points and deviations that theory could not anticipate, adjusting archetypes and toolkits based on what is actually encountered, and testing the governance forums against real decisions. Learning accelerates sharply here, precisely because the cases are real.

iv. Days 46–60: Review and Scale Decision

The final two weeks are for review and decision. The evidence gathered during the pilot is assessed against the outcome metrics established at the outset. Portfolio behavior is examined. And a deliberate decision is made: scale the model, refine it further, or stop. By this point, customer centricity is no longer a theoretical proposition. It has either demonstrated its value or it has not, and the institution has the evidence to know which.

Proof Before Perfection

The discipline of a 60-day rollout creates credibility. It forces:

Focus over breadth

Evidence over opinion

Learning over ideology

Guiding Principles for a Light Rollout

Banks that succeed with customer centricity do not announce it. They demonstrate it in one segment, with one cohort, over a defined period, and then scale from a position of demonstrated value rather than strategic intent.

Customer Centricity as a System, Not a Project

Taken together, the six articles in this series have described a complete architecture. Customer centricity begins with a diagnostic methodology: problem archetypes that give client situations a consistent language, discovery conversations that turn dialogue into insight, and solution toolkits that translate diagnosis into structured, risk-aligned responses. It is supported by an operating model (Solution Pods and cross-functional collaboration) that makes the methodology scalable without requiring organizational upheaval. AI enforcement ensures that discipline is maintained consistently as volume grows. And metrics, governance, and incentives create the conditions for the system to be self-sustaining rather than dependent on continuous management attention.

When these elements align, customer centricity becomes self-reinforcing. When any one of them is missing, the system quietly degrades. The architecture has to be complete to be durable.

Closing the Series

This final article completes the Customer Centricity series. We have moved from:

Philosophy → execution

Intent → discipline

Conversations → outcomes

The conclusion is simple:

The most successful SME lenders of the next decade will combine empathy with evidence and discipline with partnership. Customer centricity is not about being nicer to clients. It is about running a better bank.

Final Thought

Customer centricity does not fail because it is too idealistic. It fails when it is not measured, governed, and reinforced. When it is, it becomes one of the most powerful risk and performance strategies in modern banking.

Setting the Stage for the Series

This article is part of Q-Lana’s six-part Customer Centricity series on how modern SME lenders turn fragmented information into decision intelligence.

The complete framework includes articles on:

How customer centricity becomes scalable without reorganizing the bank

Why artificial intelligence must enforce discipline, not replace judgement, and

How customer centricity becomes measurable, enforced, and self-sustaining

A more detailed version of the full content is available in Q-Lana’s Customer Centricity in SME and Corporate Lending Whitepaper.

Stay in the Loop

We regularly share actionable lessons and proven approaches from our Customer Centricity series, covering implementation examples, AI support, and business models from real SME lending work.

Subscribe to our newsletter for coverage of data domains, architecture, governance, and lessons from real SME lending work.

____

Would you like to learn more? Contact us for a demo.