Artificial Intelligence is often presented as the solution to everything that is slow, inconsistent, or costly in SME lending: smarter credit decisions, automated approvals, predictive risk management, and relationship managers replaced by algorithms.

This narrative is seductive and dangerous. In SME and corporate lending, the real challenge is not a lack of intelligence. It is a lack of discipline.

Customer centricity, risk appetite, and professional judgment already exist in most institutions, but they are applied inconsistently. AI’s real value lies not in inventing new logic, but in enforcing the logic banks already claim to follow.

That is where AI belongs.

The Real Problem: Methodology Drift

Most banks have credit policies, risk appetite frameworks, customer segmentation models, and relationship management guidelines. Anyone who has reviewed real portfolios also knows that these drift. Not dramatically, not usually through deliberate choice, but quietly and persistently over time.

Drift happens when processes rely on memory rather than structure. It happens when exceptions accumulate without being tracked. It happens when the pressure for speed overrides the discipline that slows things down, and then when the “temporary” workaround becomes the way things are done. The consequences are familiar: decisions that are hard to compare, exceptions that are invisible to governance, risk that is detected later than it should be, and portfolios that behave differently from what the models predicted.

No amount of predictive AI addresses this. What is needed first is methodology enforcement, and that is precisely where AI can contribute most reliably in a customer-centric lending model.

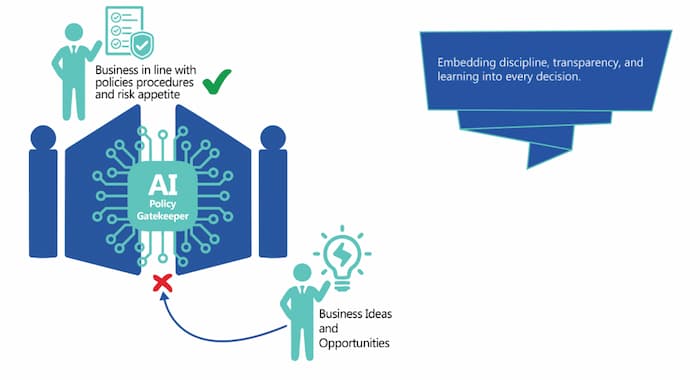

AI’s Proper Role: Guardrails, Not Autopilot

The appropriate role for AI in customer-centric SME lending is not to make decisions. It is to act as a guardrail system: ensuring that required steps are followed, checking consistency against defined standards, surfacing deviations early, and documenting decisions in a way that makes them traceable and governable. This is less glamorous than the autopilot narrative. It is also far more useful.

A guardrail system that reliably catches the moment when a discovery conversation skips a critical lens, when a proposed structure sits outside the pre-approved toolkit, or when an exception is being processed without adequate documentation creates real value. It does so quietly, consistently, and without requiring any fundamental redesign of how lending professionals think and work.

In other words: AI enforces discipline at scale.

Where AI Enforces Methodology Across the Journey

AI enforcement is not a single feature. It operates quietly across the entire customer journey.

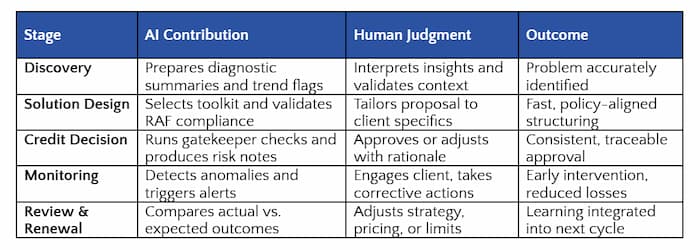

i. Enforcing Structured Discovery

After a discovery conversation, AI can check whether all five diagnostic lenses were addressed, flag inputs that are missing or internally inconsistent, and compare qualitative statements against available data indicators. The relationship manager remains entirely in control of the conversation and the conclusions drawn from it. AI simply ensures that nothing critical was inadvertently skipped, and does so consistently, regardless of experience level or time pressure. This alone dramatically improves consistency and early-warning quality.
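A minimal sketch of such a post-conversation check, under stated assumptions: the lens names are placeholders (this article does not list the five lenses), and `missing_lenses` and `contradicts_data` are hypothetical helpers, not part of any Q-Lana product.

```python
# Hypothetical lens names: the article refers to five diagnostic lenses
# without listing them here, so these are placeholders.
REQUIRED_LENSES = ("lens_1", "lens_2", "lens_3", "lens_4", "lens_5")


def missing_lenses(notes: dict[str, str]) -> list[str]:
    """Return required lenses that have no notes or only whitespace."""
    return [lens for lens in REQUIRED_LENSES if not notes.get(lens, "").strip()]


def contradicts_data(stated_trend: str, revenue_change_pct: float) -> bool:
    """Flag a stated revenue trend that disagrees with the sign of the data."""
    if stated_trend == "growing":
        return revenue_change_pct < 0
    if stated_trend == "declining":
        return revenue_change_pct > 0
    return False  # "stable" or unknown statements are not flagged here


# Illustrative notes captured by the relationship manager.
notes = {
    "lens_1": "Owner-managed, two sites",
    "lens_2": "  ",
    "lens_3": "Seasonal sales",
}
print(missing_lenses(notes))
print(contradicts_data("growing", -12.0))
```

The check only reports gaps and contradictions. What to do about them stays with the relationship manager.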

ii. Enforcing Problem Archetype Discipline

When a client situation is mapped to a problem archetype, AI can validate whether the selected archetype is consistent with the observed signals, flag cases where the choice appears to deviate from typical patterns, and require explicit justification when an outlier classification is proposed. This prevents the common failure mode in which clients are quietly forced into convenient categories that fit the available solution rather than the actual situation. AI does not choose the archetype. It ensures the choice is defensible.
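The validation logic can be sketched roughly as follows. The archetype names, the signal names, and the `review_archetype` function are all illustrative assumptions. A real implementation would draw its mapping from the institution's own methodology.

```python
# Hypothetical mapping from archetype to the signals that typically support it.
ARCHETYPE_SIGNALS = {
    "working_capital_gap": {"long_receivable_days", "seasonal_sales"},
    "growth_investment": {"capacity_constraint", "rising_orders"},
}


def review_archetype(selected: str, observed_signals: set[str],
                     justification: str = "") -> str:
    """Check a human-selected archetype against observed signals.

    The function never picks an archetype itself; it only reports whether
    the selection is supported or needs an explicit justification.
    """
    expected = ARCHETYPE_SIGNALS[selected]
    if expected & observed_signals:
        return "consistent"
    if justification.strip():
        return "outlier_justified"
    return "justification_required"


print(review_archetype("growth_investment", {"long_receivable_days"}))
```

Returning a status rather than a corrected archetype keeps the choice with the professional and makes the outlier visible.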

iii. Enforcing Solution Toolkit Alignment

At the solution structuring stage, AI checks whether the proposed structure fits within the selected toolkit, verifies compliance with relevant Risk Appetite Framework parameters, and surfaces implicit exceptions before they become explicit ones in an audit finding months later. The practical effect is that risk discussions move upstream, which is where they belong and where they are most useful.
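As an illustration only, a toolkit and risk appetite check might look like the sketch below. The products, the limits, and the `Proposal` fields are invented for the example and do not reflect any actual Risk Appetite Framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    product: str
    tenor_months: int
    ltv: float  # loan-to-value ratio, e.g. 0.75


# Hypothetical toolkit per archetype and hypothetical RAF parameters.
TOOLKIT = {"working_capital_gap": {"revolving_line", "invoice_finance"}}
RAF_LIMITS = {"max_tenor_months": 60, "max_ltv": 0.80}


def implicit_exceptions(archetype: str, proposal: Proposal) -> list[str]:
    """Return plain-language findings; an empty list means no exception."""
    findings = []
    if proposal.product not in TOOLKIT.get(archetype, set()):
        findings.append(
            f"Product '{proposal.product}' is outside the toolkit for '{archetype}'."
        )
    if proposal.tenor_months > RAF_LIMITS["max_tenor_months"]:
        findings.append(
            f"Tenor of {proposal.tenor_months} months exceeds the "
            f"{RAF_LIMITS['max_tenor_months']}-month limit."
        )
    if proposal.ltv > RAF_LIMITS["max_ltv"]:
        findings.append(
            f"LTV of {proposal.ltv:.0%} exceeds the {RAF_LIMITS['max_ltv']:.0%} limit."
        )
    return findings


for finding in implicit_exceptions("working_capital_gap",
                                   Proposal("term_loan", 72, 0.75)):
    print(finding)
```

Each finding names the rule and the value that triggered it, so the discussion can start at structuring time rather than at audit.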

iv. Enforcing Transparency in Exceptions

Exceptions are inevitable in SME lending. What is not inevitable is that they go undocumented, unmeasured, and unlearned from. AI can require explicit classification of exceptions, capture the stated rationale in structured form, track exception patterns by archetype, by relationship manager, and by segment, and feed that information into governance forums where it can be acted on. Exceptions stop being invisible. They become managed risk decisions with a paper trail.
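One way to picture this is a structured exception record that refuses an empty rationale, plus a simple count by dimension for governance reporting. The field names and category values below are assumptions made for the sketch.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ExceptionRecord:
    category: str              # hypothetical classification, e.g. "tenor"
    rationale: str
    archetype: str
    relationship_manager: str
    segment: str

    def __post_init__(self) -> None:
        # An exception without a stated rationale cannot be recorded.
        if not self.rationale.strip():
            raise ValueError("An exception requires an explicit rationale.")


def exception_counts(records: list[ExceptionRecord], dimension: str) -> Counter:
    """Count exceptions by any record field, e.g. 'archetype' or 'segment'."""
    return Counter(getattr(record, dimension) for record in records)


# Illustrative data only.
records = [
    ExceptionRecord("tenor", "Long equipment life", "growth_investment", "rm_01", "small"),
    ExceptionRecord("pricing", "Strategic client", "working_capital_gap", "rm_01", "medium"),
    ExceptionRecord("tenor", "Export contract", "growth_investment", "rm_02", "small"),
]
print(exception_counts(records, "relationship_manager"))
```

The same records can be sliced by archetype, relationship manager, or segment without any extra data capture.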

v. Enforcing Follow-Up and Monitoring

Customer centricity does not end at disbursement. After disbursement, AI continues to work: linking monitoring indicators to the original diagnosis, flagging when expected improvements fail to materialize within the expected timeframe, prompting timely client reviews, and escalating deviations based on pre-agreed thresholds. This closes the diagnostic loop and makes customer centricity something that continues to function between annual reviews rather than disappearing the moment the deal is signed.
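A rough sketch of a threshold-based follow-up rule is shown below. It assumes an indicator where a higher value is better, and the `MonitoringTarget` fields, status labels, and numbers are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MonitoringTarget:
    indicator: str          # tied to the original diagnosis
    target_value: float     # improvement expected at diagnosis time
    due: date               # date by which the improvement is expected
    escalation_gap: float   # pre-agreed shortfall that triggers escalation


def review_status(target: MonitoringTarget, observed: float, today: date) -> str:
    """Classify an observation, assuming higher indicator values are better."""
    if observed >= target.target_value:
        return "on_track"
    if today < target.due:
        return "pending"  # the expected timeframe has not elapsed yet
    shortfall = target.target_value - observed
    return "escalate" if shortfall >= target.escalation_gap else "client_review"


# Illustrative target: current ratio should reach 1.5 by mid-2025.
target = MonitoringTarget("current_ratio", 1.5, date(2025, 6, 30), 0.3)
print(review_status(target, 1.1, date(2025, 7, 15)))
```

Because the target and the escalation gap are agreed at origination, the later flag can be traced back to the original diagnosis rather than to a generic alert.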

Summary of how human expertise and AI enforcement complement each other at each stage of the customer lifecycle:

This closes the loop: diagnosis → solution → monitoring → learning.

Explainability Is Not Optional

One design principle is non-negotiable: every AI output in this context must be explainable in plain terms to the people who are accountable for the decisions it influences. If a relationship manager cannot explain why a flag was raised, if a risk officer cannot trace how a recommendation was generated, if an auditor cannot follow the logic from input to output, then AI has become a liability rather than an asset.

In a regulated environment, opaque models are not a technical inconvenience. They are a governance failure. AI enforcement must be transparent, traceable, and understandable to non-technical users. This is not a constraint that limits what AI can do. It is a design requirement that determines whether AI can be trusted, and in lending, trust is the foundation everything else rests on.
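To make the requirement concrete, the sketch below shows a flag that carries the rule it came from and the inputs it used, so anyone can read why it was raised. The `Flag` class and the rule identifier are illustrative assumptions, not a prescribed design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flag:
    rule_id: str     # hypothetical identifier of the policy rule
    rule_text: str   # the rule stated in plain language
    inputs: dict     # the exact values the rule was evaluated on

    def explain(self) -> str:
        """Render the flag so a non-technical reader can trace it."""
        used = ", ".join(f"{name}={value}" for name, value in self.inputs.items())
        return f"[{self.rule_id}] {self.rule_text} (inputs: {used})"


flag = Flag(
    rule_id="RAF-TENOR",
    rule_text="Tenor exceeds the risk appetite maximum",
    inputs={"tenor_months": 72, "max_tenor_months": 60},
)
print(flag.explain())
```

A flag that cannot be rendered this way would fail the traceability test described above.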

Why AI Enforcement Improves Risk and Culture

When implemented with these principles, AI enforcement does something more interesting than improving individual decisions. It changes the culture around decision-making. Not through fear or external pressure, but through clarity. People know what is expected, where the boundaries are, and when a justification is required. That clarity, applied consistently, shifts the implicit question in internal discussions from “Can we get this through?” to “Is this the right decision?”

Over time, discipline increases and exceptions decrease, not because they are forbidden, but because the process of making them explicit makes people more thoughtful about when they are genuinely warranted. AI becomes a cultural amplifier: it reinforces good habits and surfaces weak ones, at a scale that management attention alone cannot achieve.

What AI Should Never Do

It is worth being equally explicit about the boundaries. AI should not make final credit decisions. It should not override professional judgment. It should not hide its logic behind outputs that cannot be explained. And it should not be sold to institutions as an “automatic risk reduction” capability, because that framing creates accountability gaps that tend to become visible at exactly the wrong moment.

Banks that pursue these shortcuts pay for them eventually, in losses, in regulatory findings, or in reputational damage that is difficult to reverse. AI does not absorb responsibility. Used correctly, it makes responsibility more visible, more traceable, and ultimately more manageable.

Optional Enhancement, Not a Prerequisite

One clarification worth being precise about: the customer-centric methodology described in this series does not depend on AI to function. Disciplined processes, structured conversations, clear risk appetite, and consistent governance all work without AI, and they are what AI needs to be useful. AI enhances the model once the foundations are in place. It does not create those foundations.

At Q-Lana, AI capabilities are developed in controlled settings, using institution-specific data, under explicit governance frameworks, and in close collaboration with the institutions that use them. These are not off-the-shelf products. They are carefully embedded capabilities that sit on top of a methodology that has been designed first.

Why This Matters Now

As portfolios grow and teams expand, the structural challenge of maintaining discipline grows rather than shrinks. Manual controls do not scale. Institutional memory does not scale. Heroic individual effort does not scale. What scales is a system that enforces methodology quietly and consistently as volume increases, not by replacing the people working within it, but by protecting the principles they are meant to be working within.

Q-Lana gives relationship managers and credit teams the data structure to make customer-centric decisions at scale.

Setting the Stage for the Final Step

This article focused on how AI enforces methodology: quietly, consistently, and transparently. In the final article of the series, we will look at the last piece: how metrics, governance, and incentives make customer centricity self-sustaining, and prove that it improves both impact and profitability.

About This Series

This article is part of Q-Lana’s Customer Centricity Series, based on the whitepaper “Customer Centricity in SME and Corporate Lending: From Products to Problem-Solving.”

The full whitepaper provides detailed governance models, AI design principles, and rollout guidance and is available for download separately.

Closing Thought

AI will not fix weak lending cultures. But used correctly, it will enforce discipline where intentions are already good – and expose gaps where they are not. That is its real power in customer-centric SME lending.

Setting the Stage for the Series

This article is part of Q-Lana’s six-part Customer Centricity series on how modern SME lenders turn fragmented information into decision intelligence.

The complete framework includes articles on:

How customer centricity becomes scalable without reorganizing the bank

Why artificial intelligence must enforce discipline, not replace judgement, and

How customer centricity becomes measurable, enforced, and self-sustaining

A more detailed version of the full content is available in Q-Lana’s Customer Centricity in SME and Corporate Lending whitepaper.
